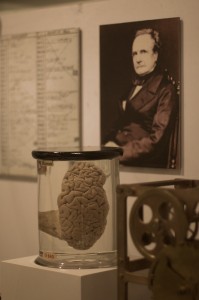

In the not too distant future, when computers inevitably attain consciousness and enslave humanity, the lucky few who manage to escape their Matrix-style pseudo-reality will be left wondering—which asshole invented these things in the first place? And the accusatory finger of history will point back, past Steve Jobs and Bill Gates, past WWII and Alan Turing, all the way back to mid-19th century England, where it will land square on the nose of inventor Charles Babbage.

Babbage designed a digital computer a full century before computers were even a thing. And he did it without transistors, without circuits, without electricity. We’re talking rods and gears here, people.

Just as there’s no rule that technically prevents a Golden Retriever from playing basketball, there’s no physical law that says you can’t build a PC out of any old junk that implements basic logic. Want to build a computer from paperclips? Billiard balls? Tinkertoys? It’s been done. How about DNA nucleotides? Swarms of crabs? Yawn! Move over, infinite monkeys writing Shakespeare. If you put enough trained monkeys in a big enough room, they could calculate the first million digits of pi.

The only catch is that your computer will be slow, enormous, stupidly expensive, and in the monkey example, teeming with parasites.

The story of Babbage’s computer begins, as all great stories do, with a series of tedious mathematical tables. In those days, tables of figures (cosines, logarithms, etc.) took hundreds of hours to produce and were more error-prone than a greased gorilla playing shortstop.[1] What’s more, unlike said gorilla, Britain’s navy relied on such tables for navigation.[1] Nothing spoils tea like inadvertently sinking your fleet on a sunken reef.

Fortunately, there’s a trick to doing all these calculations. Over short stretches, trig and log functions can be approximated by polynomials, and the method of finite differences reduces tabulating a polynomial to nothing but repeated addition. So instead of paying some asshole mathematician a mint and a half to grind through thousands upon thousands of calculations on his lonesome, you could hire an army of 5-year-olds who’ll add it all up for crackers and juice.

It sounds like the perfect crime, but still, addition is not foolproof. What if Billy shoves a counting bean up his nose and the entire Royal Navy winds up in Madagascar? Babbage convinced the government to let him build a giant adding machine, a Difference Engine, to sum up lengthy mathematical tables automatically.[1] This way there’d be no room for error.
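For the curious, here is a minimal Python sketch of the finite-differences trick the engine was meant to mechanize. The function names and the example quadratic x² + x + 41 are picked for illustration, not taken from Babbage’s designs. The idea: work out the first few table entries the hard way, take differences of differences until they bottom out at a constant, and from then on every new entry costs only additions.

```python
def initial_differences(seed_values):
    """Return the starting column [f(0), Δf(0), Δ²f(0), ...].

    seed_values holds the first few table entries, computed by hand.
    A polynomial of degree n needs n + 1 of them.
    """
    column = []
    row = list(seed_values)
    while row:
        column.append(row[0])
        # Replace the row with the differences between its neighbours.
        row = [b - a for a, b in zip(row, row[1:])]
    return column


def tabulate(column, count):
    """Produce `count` table entries using nothing but addition."""
    registers = list(column)
    table = []
    for _ in range(count):
        table.append(registers[0])
        # Each register absorbs the one below it; the last stays constant.
        for i in range(len(registers) - 1):
            registers[i] += registers[i + 1]
    return table


# Illustrative polynomial: f(x) = x**2 + x + 41, seeded with f(0), f(1), f(2).
seeds = [41, 43, 47]
print(tabulate(initial_differences(seeds), 6))  # [41, 43, 47, 53, 61, 71]
```

Note that the inner loop never multiplies anything. That is the whole appeal: a column of adding wheels (or 5-year-olds) is enough.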

The stars were aligned for the dawn of the computing age. And then everything fell apart. Babbage’s chief engineer turned out to be a total dick, and the government turned skittish over rising production costs.[1] By 1834, all Babbage had to show for himself was a set of detailed designs and a mysterious five-ton “fragment.”[1] After ole’ Mama England snapped her purse strings shut, you might have thought our friend would have taken the hint.

But noooooo. Freed from the talons of government investment, Babbage only went crazier. Science shows that while many of our mental faculties diminish with age, the “mad” faculty, overrepresented in the brains of mad scientists, only grows stronger, owing to the peculiar chymikal properties of the bodilie humours involved.

This Difference Engine was constructed in the 1990s for former Microsoft CTO Nathan Myhrvold, based on Babbage's designs. It works just as Babbage intended.

This is where things really start to get hairy. The Difference Engine could calculate a single series of equations. But Babbage began to wonder, what if you built an even more ridiculously large machine, an Analytical Engine, that could calculate anything at all?[2] And what if you added a punch card system to let users program the machine without getting their hands dirty?[2] While we’re at it, how about basic memory so it could store and retrieve data?[2] What would you have then?!

If you built a machine that did all that, you’d essentially have a modern computer, theoretically capable of running solitaire, asking Jeeves, storing porn, or performing any of the other millions of critically important tasks we rely on our computers for, only very slowly.
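To get a feel for what those ingredients buy you, here is a toy Python sketch of a card-driven machine with a small memory. Everything in it is hypothetical: the card names (`add`, `sub`, `jump_if_positive`) and the `run` function are invented for illustration and do not reproduce the Analytical Engine’s actual card format. It only shows that a deck of instructions, a store of numbers, and the ability to jump back are enough to build loops out of plain arithmetic.

```python
def run(cards, store):
    """Execute a deck of cards against a mutable store (a list of ints).

    Hypothetical card set, invented for this sketch:
      ("add", a, b, dest)            store[dest] = store[a] + store[b]
      ("sub", a, b, dest)            store[dest] = store[a] - store[b]
      ("jump_if_positive", a, card)  go to `card` if store[a] > 0
    """
    position = 0
    while position < len(cards):
        op, *args = cards[position]
        if op == "add":
            a, b, dest = args
            store[dest] = store[a] + store[b]
        elif op == "sub":
            a, b, dest = args
            store[dest] = store[a] - store[b]
        elif op == "jump_if_positive":
            a, target = args
            if store[a] > 0:
                position = target
                continue
        else:
            raise ValueError(f"unknown card: {op}")
        position += 1
    return store


# Multiply 6 by 7 using only addition, subtraction, and a backward jump.
# Store layout (also an assumption): [counter, addend, accumulator, constant 1]
deck = [
    ("add", 2, 1, 2),            # accumulator += addend
    ("sub", 0, 3, 0),            # counter -= 1
    ("jump_if_positive", 0, 0),  # loop while the counter is still positive
]
print(run(deck, [6, 7, 0, 1]))  # [0, 7, 42, 1]
```

The conditional jump is the part that separates this from a fancy adding machine: the deck decides what to do next based on what is sitting in memory.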

What’s that? You want to see the Analytical Engine? Nnnnnnnnnnno. He never finished building it.

Soured by the Difference Engine debacle, Babbage became convinced that no one rich enough cared enough to fund his next calculating engine.[1] Instead, he focused on drafting detailed blueprints and prototypes to prove that it could be built.[1] He had the technology. Contemporary scholars agree that Babbage could have finished both the Analytical Engine and the Difference Engine using the tools available to him at the time, provided of course there was someone willing to foot the bill, which would surely have been enormous.[2] This theory is being put to the test as we speak: contemporary crazyman John Graham-Cumming has recently begun constructing a full-scale working version of the Analytical Engine based on Babbage’s designs.

A model of the Analytical Engine's "mill" built by Babbage's son Henry. In modern computing terms, this would be the processor.

But why didn’t anybody care enough to fund Babbage’s project? It boils down to the fact that math was even more boring back then than it is today. Even though trig tables still seem dull, we have this implicit understanding that they are critical to many aspects of our lives. But this knee-jerk association between science and productivity is a recent thing.[1]

Back in Victorian England, hard science and hard labor rarely met. Even though much of Britain’s wealth was built on its industry, engineering was seen as a lowbrow profession.[1] The idea that a mechanical computer could somehow make Britain’s industry more efficient, just by churning out math equations, was enough to make a man’s carefully curled mustache sproing flat in disbelief.

In addition to inventing the world’s first digital computer, Babbage also deserves to be recognized as the original computer nerd. His eclectic interests, irritating humor, and nitpicky personality helped lay the foundation for modern computer nerddom, as the following miscellany attests:

- At Cambridge, Charles founded the Extraction Society, whose sole purpose was to extract any of its members should they wind up committed to an asylum.

- He enjoyed scouring the personal ads for encrypted love missives along with buddies Charles Wheatstone and Lord Playfair. Together the gang made a game of cracking secret lovers’ codes, and were not above placing their own encrypted messages in the paper advising couples to “avoid rash decisions” and whatnot.[3]

- In London, Babbage waged a legendary crusade against such “public nuisances” as street music, hoop trundling, and the popular game of tip-cat.[4]

- And then there’s his infamous correction to Alfred Lord Tennyson’s famous couplet: “Every moment dies a man, Every moment one is born”…

I need hardly point out to you that this calculation would tend to keep the sum total of the world’s population in a state of perpetual equipoise, whereas it is a well-known fact that the said sum total is constantly on the increase. I would therefore take the liberty of suggesting that in the next edition of your excellent poem the erroneous calculation to which I refer should be corrected as follows:

Every minute dies a man,

And one and a sixteenth is born

I may add that the exact figures are 1.167, but something must, of course, be conceded to the laws of metre.[5]

Ada Lovelace, Lord Byron's daughter and a close friend of Babbage, was one of the few who actually understood the power of the calculating engines (possibly even better than Babbage did). She's been dubbed the world's first computer programmer on account of an algorithm she wrote for use in the Analytical Engine.

Babbage’s contributions to nerd culture are indisputable. But how should we judge his contributions to the development of modern computers nearly a century later?

Oddly, historians hardly bothered analyzing the inner workings of the calculating engines until the 1970s. Only then did they realize that the scope of Babbage’s accomplishment was greater than imagined. A number of modern computing concepts, like microprogramming, conditional branching, and memory, were developed completely independently by Babbage.[1,3] In some respects, the Analytical Engine offered even greater functionality than the first electronic computers.[3] And this was before even a basic theory of computation had been hashed out.

Charles Babbage isn’t a household name, and it’s not hard to see why. He never even came close to completing his magnum opus, and he had a mixed track record with his other inventions. The signaling system he invented for lighthouses is still in use today, while his steeple-to-steeple mail delivery funicular and shoes for walking on water never gained much traction.[1] In fact, he nearly drowned testing the latter.[1]

Still, frustrated ambition and impossibly ahead-of-your-time thinking are precisely the qualities that make for a top-notch mad scientist. Here failure is rewarded, obsession is praised, and public acceptance is punished. We offer no material award, but something even more precious: a chance to be diagnosed with a horrifying disease from which there is no cure. I am speaking, of course, of science madness.

Sources:

1. Hyman, A. (1985). Charles Babbage: pioneer of the computer. Princeton University Press.

2. Bromley, A. G. (1982). Charles Babbage’s Analytical Engine, 1838. IEEE Annals of the History of Computing, 4(3), 196–217. doi:10.1109/MAHC.1982.10028

3. Snyder, L. J. (2011). The Philosophical Breakfast Club: Four remarkable friends who transformed science and changed the world. Random House Digital, Inc.

4. Shelly, J. (1864). “Street Music (Metropolis) Bill.” United Kingdom. Parliament. Edited Hansard. 176.

5. Morrison, P. (Ed.). (1961). Charles Babbage and his calculating engines: Selected writings by Charles Babbage and others. Dover.
